Noob While Loop Only Reads Last Line Correctly

I'm trying to loop through newlines in a file and print both its text and row count.

However, it only shows correctly on the last line, probably because of [ -n "$line" ]. I noticed that switching around "$line" "$i" would fix this error, but I need it to be formatted like the code since I'm passing the result into another command.

Code:

i=0
while IFS= read -r line || [ -n "$line" ]; do
    echo "$line" "$i"
    i=$((i + 1))
done < "$1"

Text:

Apple

Banana

Coconut

Durian

Need:

Apple 0

Banana 1

Coconut 2

Durian 3

Error:

0ple

1nana

2conut

Durian 3
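
One possible explanation, offered as a guess from the output above rather than a confirmed diagnosis: if the input file was saved with Windows (CRLF) line endings, every line except the last ends in a carriage return, so the terminal jumps back to column 0 before the counter is printed. A minimal sketch that strips a trailing carriage return before printing (the function name number_lines is made up for illustration):

```shell
number_lines() {
    local i=0 line
    # `|| [ -n "$line" ]` still processes a last line that has no trailing newline.
    while IFS= read -r line || [ -n "$line" ]; do
        line=${line%$'\r'}      # drop one trailing carriage return, if present
        echo "$line" "$i"
        i=$((i + 1))
    done < "$1"
}
```

Called as number_lines "$1" inside the script, this keeps the "text counter" order that the downstream command needs.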

https://redd.it/10cw5ap

@r_bash

Make Neofetch update hard-linked image

TL;DR How can I make Neofetch update a hard-linked image without reboot?

So I have Neofetch configured to display an image, which it does perfectly. The image is a graph, which is created / overwritten by a python script which I update daily. The image in the directory displays perfectly up-to-date, while Neofetch is still using an out-of-date version of the graph / image.

Any tips how I could make Neofetch update the image? Is it stored somewhere for Neofetch to use? If so, I could probably integrate deleting such a backup in my script.

SOLVED:

Typical case of feeling stupid just minutes after posting, when the question already contains the path to the answer:

In the neofetch config we find the path to the thumbnails folder: home/user/.cache/thumbnails/neofetch

Of course, after wiping the contents of the folder, a new instance of neofetch will get a fresh version of the image and everything works smoothly. I will integrate deleting the contents of the thumbnails folder into my script and everything will be fine.
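
The cleanup step described above could be sketched roughly like this. The helper name clear_neofetch_thumbnails is hypothetical, and the default path is an assumption based on the folder named in the post; the actual thumbnail path should be taken from your own neofetch config.

```shell
clear_neofetch_thumbnails() {
    # Assumed default location; pass another directory as the first argument.
    local dir=${1:-"$HOME/.cache/thumbnails/neofetch"}
    [ -d "$dir" ] || return 0           # nothing cached yet, nothing to do
    find "$dir" -mindepth 1 -delete     # remove the contents, keep the folder itself
}
```

Calling clear_neofetch_thumbnails right after the graph is regenerated should make the next neofetch run rebuild its thumbnail.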

Hope this helps anybody though

https://redd.it/10da4sh

Bash Scripting - If statement always returns truthy value

Hi all,

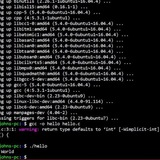

I have been facing an issue with my bash script where the "if" statement always returns true, even when the condition is not met. Here is the script:

#!/bin/bash

# Required to be able to use "nvm" in a shell script
export NVM_DIR=$HOME/.nvm;
source $NVM_DIR/nvm.sh;

# Check if Node is already at the latest version
CURRENT_NODE_VERSION=$(nvm current)
LATEST_NODE_VERSION=$(nvm ls-remote --lts | grep 'Latest LTS: Hydrogen' | awk '{print $2}')

if [ "$CURRENT_NODE_VERSION" != "$LATEST_NODE_VERSION" ]; then
    echo "Updating Node"
    nvm install --lts=Hydrogen --reinstall-packages-from="$CURRENT_NODE_VERSION"
    nvm use --lts
else
    echo "Node is already up to date"
fi

# Update npm global packages
echo "Updating npm global packages"
npm update -g --depth 0

Could anyone please help me understand why this is happening and how to fix it?

Thank you
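
A possible cause, stated as an assumption since the post does not show the values being compared: the line printed by nvm ls-remote may carry extra fields (for example a leading -> marker on the version in use) or color escape codes, in which case awk '{print $2}' does not yield a bare version string and the two variables never match. The sketch below pulls the first vX.Y.Z token out of whatever it is given; extract_version is a hypothetical helper.

```shell
extract_version() {
    # Print the first token on stdin that looks like v<major>.<minor>.<patch>.
    grep -oE 'v[0-9]+\.[0-9]+\.[0-9]+' | head -n 1
}
```

It could replace the awk stage, as in nvm ls-remote --lts | grep 'Latest LTS: Hydrogen' | extract_version. Printing both variables with printf '%q\n' before the comparison is a quick way to see hidden characters.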

https://redd.it/10d9712

Question: how do I make sure that a line of commands will not stop the rest of the script?

There is &&, which controls whether the next command runs depending on whether the earlier one succeeded.

I need to put two commands on one line. How do I make sure that, if those two commands do not execute, the rest of the script keeps going and is not affected by this line?

For instance, a line with 'date && cal'.

If those by any chance do not execute, how do I make sure the rest of the script will continue?

Should I add something at the end of the line, or will a line like 'COMMAND && COMMAND' as it is never halt the rest of the script, whether it executes or not?
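
As a rough illustration of the question above: in bash a failing command does not stop a script by itself; that only becomes a concern when something like set -e is in effect. The sketch below uses false as a stand-in for a command that fails, and the function name demo_and_list is made up.

```shell
demo_and_list() {
    # `false` fails, so the part after && is skipped, and the script
    # simply moves on to the next line.
    false && echo "second command"
    echo "script continues"

    # With `set -e` active, appending `|| true` makes the whole line count
    # as successful. The subshell keeps `set -e` from leaking to the caller.
    (
        set -e
        { false && echo "second command"; } || true
        echo "still running under set -e"
    )
}
```

So a line such as date && cal || true is one way to make sure the line can never be the reason a script stops.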

https://redd.it/10dhdcg

Why can't I operate on the return of my ssh call using the eq comparison operator?

Hello, I've got a problem with using the return of an ssh call and comparing it with numbers and thought maybe someone can help me.

I have two different calls that I make in a script, one of them is a mkdir call like this:

RET=
ssh user@system -t "mkdir -p <path>"

And the other one does the following:

RET=
ssh user@system -t "ls -l <path>"

When I now call $?, I will get a 0 in both cases if the command has been executed successfully, but if I want to compare that 0 with the eq (or -eq) operator, I get an error in the first command, but not in the second.

I get that -eq is used to compare numbers, which I assumed is what is getting returned.

Is there any explanation that I'm not seeing for why the first command isn't returning such a number but rather a string?

If I use the != operator, I can do the comparison no problem, but not with eq.

Any ideas?
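
A possible reading, hedged because the assignments above are incomplete: if RET holds what the remote command printed rather than its exit status, then mkdir -p leaves it empty, and an unquoted empty value inside [ ... -eq 0 ] is an error for test, while != compares strings without complaint. The sketch below keeps output and status in separate variables; describe_result is a hypothetical name, and a local command stands in for the ssh call.

```shell
describe_result() {
    local out status
    out=$("$@" 2>/dev/null)    # captures what the command prints, not its exit code
    status=$?                  # exit status of the command substitution above
    if [ "$status" -eq 0 ]; then
        printf 'status=%s (success), output is %s characters long\n' "$status" "${#out}"
    else
        printf 'status=%s (failure), output is %s characters long\n' "$status" "${#out}"
    fi
}
```

For example, describe_result ssh user@system "mkdir -p <path>" would report the status separately from the (empty) output. Quoting the variable inside [ ] also avoids the error when the value is empty.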

https://redd.it/10dg0s2

Is there a good way to programmatically determine how many inputs some function can support?

WHAT I WANT TO DO

Programs like sha256sum accept a list of inputs and will apply whatever the function does (e.g., compute the sha256sum) to each (non-option) input independently. You can do, for example:

sha256sum file1 file2 file3 ...

or

find ./ -type f | xargs -P<N> sha256sum

Other programs, for example basename, only support 1 non-option input. You can't use

basename file1 file2 file3 ...

nor

find ./ -type f | xargs -P<N> basename

Instead, you have to use

for file in file1 file2 file3 ...; do
    basename "$file"
done

or

find ./ -type f | xargs -l1 -P<N> basename

I'm trying to figure out a good way to determine, for some generic function, which of the above is the case (and, in the "multiple inputs" case, what the maximum number of inputs is) without requiring any user interaction.

Any good way to do this?
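
One heuristic, offered as a sketch rather than a general answer: run the command once with two sample inputs and check whether it prints one line per input. The name accepts_multiple is hypothetical, and the probe is easily fooled by commands that print headers, totals, or several lines per input. For the upper bound, getconf ARG_MAX reports the system limit on the total size of the argument list.

```shell
accepts_multiple() {
    # Usage: accepts_multiple CMD INPUT1 INPUT2
    # Runs CMD once with both inputs and succeeds if exactly two lines
    # were printed, i.e. one per input.
    local lines
    lines=$("$@" 2>/dev/null | wc -l)
    (( lines == 2 ))
}
```

For instance, accepts_multiple cksum file1 file2 succeeds, while accepts_multiple basename file1 file2 fails because basename treats the second argument as a suffix.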

WHY

I put together a shell function for parallelizing loops called forkrun. Instead of forking off individual processes, it initially forks off N coprocs (for N worker threads) and then pipes data to them. Its usage is much the same as using parallel or xargs -P, though with fewer bells and whistles (it has flags that support changing the number of parallel worker threads and producing output that is ordered the same as the inputs).

Right now it sends 1 input at a time to the worker coprocs, making it equivalent to xargs -l1 -P<N>, and compared to xargs -l1 -P<N> it is considerably faster (4-5x on my hardware). BUT, xargs -P<N> is still ~2x as fast (but only works on functions like sha256sum that accept many inputs). I could add a user parameter to set how many lines to group together and send to the worker coprocs (much like the xargs -L parameter), but would like to, if possible, be able to dynamically choose an optimal value for this on a function-by-function basis.

https://redd.it/10dtkao
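
The "group several lines per call" idea mentioned in the post could look roughly like the sketch below. This is not how forkrun is implemented; run_in_batches is a made-up name, and the sketch assumes bash 4 or later for mapfile.

```shell
run_in_batches() {
    # Usage: run_in_batches N CMD [ARGS...]   (input lines arrive on stdin)
    local n=$1 batch
    shift
    # mapfile -n reads at most N lines per call; an empty array means EOF.
    while mapfile -t -n "$n" batch && (( ${#batch[@]} > 0 )); do
        "$@" "${batch[@]}"
    done
}
```

For example, find ./ -type f | run_in_batches 64 sha256sum hands sha256sum up to 64 files per invocation. The command itself must not read stdin, or it will consume the remaining input lines.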

GitHub: forkrun/forkrun.bash at main · jkool702/forkrun (runs multiple inputs through a script/function in parallel using bash coprocs)

Bash Scripting training/certification

Hi everyone,

Could someone please let me know a possible training or certification that can be done to learn bash shell scripting better? While a wealth of information is accessible online, I would like a course that can bring me and my team from zero to pros. We don't mind paying for it, but would like it to be recognized by the wider IT community; something like what CompTIA does.

Thanks!

https://redd.it/10dud81

Okay.. At a bit of a loss here. Numbers in variable names?....

This is not valid according to my shell:

2_4GHzAdapter=wlp35s0

But this is:

GHzAdapter=wlp35s0

I have for years used numbers in variable names but my system has decided not to allow it. It was my understanding that underscores and numbers were fine but any other symbols aside from numbers, letters, and underscores were not... Or is my memory a lie..?

Solution:

Apparently variables cannot start with a number. Something I have never come across and something that all the guides on variable names I have read trying to solve this issue failed to mention. I hope this helps someone... this issue has held me up for an hour and I am mad enough to stop for the day... I feel stupid now.
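
The rule from the solution above can be checked with a short test. The function name is_valid_name is made up for illustration; the pattern encodes "a letter or underscore first, then letters, digits, or underscores".

```shell
is_valid_name() {
    # Succeeds if $1 is usable as a shell variable name.
    [[ $1 =~ ^[A-Za-z_][A-Za-z0-9_]*$ ]]
}
```

With this, is_valid_name 2_4GHzAdapter fails, while is_valid_name GHzAdapter and is_valid_name _2_4GHzAdapter succeed, so prefixing an underscore or a letter is enough to keep the digits in the name.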

https://redd.it/10e583a

Finding files based on number inside

HW assignment: I have 100 files, all named accordingly from 1 to 100. In each of the files, there's a random number. I need to find 15 files with largest random numbers then write the names of the said files inside some .txt file.

I have tried adding the filename into the file itself upon randomizing the numbers. Something like this:

for x in $(seq 1 100)
do
    shuf -i 1-10000 -n 1 -o "$x.txt"
    echo "$x.txt" >> "$x.txt"
done

That way I could somehow sort all of these random numbers into a separate .txt file without losing the names of the files the numbers initially belonged to. Then I could maybe use the head command to find the values I need, but I don't understand the logistics of it. Maybe I don't need to write the filename inside the file itself in the first place? I can't think of any convenient way to do this.
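
One way the sort-then-head idea could work without storing the filename inside each file: print "number filename" pairs, sort them numerically in reverse, and keep the top entries. This is a sketch under the assumption that the number sits on the first line of every .txt file; top_files is a hypothetical name.

```shell
top_files() {
    # Usage: top_files DIR COUNT
    # Prints the names of the COUNT files in DIR whose first line holds
    # the largest numbers, largest first.
    local dir=$1 count=$2 f
    for f in "$dir"/*.txt; do
        printf '%s %s\n' "$(head -n 1 "$f")" "${f##*/}"
    done | sort -rn | head -n "$count" | cut -d ' ' -f 2-
}
```

Something like top_files . 15 > ../result.txt would then write the 15 names to a file outside the directory being scanned.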

https://redd.it/10e9wjz

Creating a grep pattern that would work like this: <Keyword><any amount of special characters or lowercase letters><any numbers and uppercase letters>

I wanted to create a script that can find the invoice number in text. It basically converts a PDF to txt and then searches for the company name and invoice number. And I have a problem with the invoice number.

For now I use this to get the invoice number (output.txt is the converted PDF):

```
#!/bin/bash
#some code before

keyword1="Rechnung"
keyword2="Invoice"

# Use grep to search for the pattern in the file and extract only the matched (non-empty) parts of a matching line
output=$(grep -ioE "$keyword1[^0-9]*([A-Z]*[0-9]+)|$keyword2[^0-9]*([A-Z]*[0-9]+)" "$path/$fileName/output.txt")

# Use awk to extract the first set of letters and numbers that comes after keyword
result_array=($(echo $output | awk -F '[^[:alnum:]]' '{for (i=1; i<=NF; i++) if ($i ~ /[A-Z]*[0-9]+/) print $i}'))

#some code after, where it finds the longest element in the array
```

$output gets the line with the keyword, and then $result_array gets only the number.

This works if, for example, the invoice number consists only of digits (91895851851) or has letters at the start (RE19515515). But some of the invoices have letters between the numbers, for example AA1111AA11. In that case, this script will only take AA1111 and leave everything else.

So I need a pattern that would work like this:

<Keyword><any amount of spaces, special characters or lowercase letters><any numbers and uppercase letters>

And it should return only the invoice number.

It should work on all cases below:

"invoice nunmber is RE5050AE5050" ==> "RE5050AE5050"

"invoiceNumber: - . | RE5050AE5050" ==> "RE5050AE5050"

and so on.
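
A two-step sketch of the requested pattern, under the assumption that the invoice number is the first run of uppercase letters and digits after the keyword that contains at least one digit. Because -i makes letter classes match both cases, the case-sensitive part is done in a second grep. The function name extract_invoice_number is made up.

```shell
extract_invoice_number() {
    # Step 1 (case-insensitive): keep the text from the keyword up to the
    # end of the first token that contains a digit.
    # Step 2 (case-sensitive): from that text, keep the first run of
    # uppercase letters and digits that has at least one digit in it.
    grep -ioE '(rechnung|invoice)[^0-9]*[[:alnum:]]+' |
        grep -oE '[[:upper:][:digit:]]*[[:digit:]][[:upper:][:digit:]]*' |
        head -n 1
}
```

It reads text on stdin, for example extract_invoice_number < "$path/$fileName/output.txt". A keyword written in capitals and glued directly to the number (INVOICE123) would be swallowed into the result, so that case needs separate handling.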

https://redd.it/10e8mh7

Unix/Linux Command Combinations That Every Developer Should Know

https://levelup.gitconnected.com/unix-linux-command-combinations-that-every-developer-should-know-9ae475cf6568?sk=8f264980b4cb013c5536e23387c32275

https://redd.it/10efm3n

@r_bash

Save your time by using these command combinations in your terminal and scripts

vim in a while loop gets remaining lines as a buffer... can anyone help explain?

So I'm trying to edit a bunch of things, one at a time slowly, in a loop. I'm doing this with a while loop (see wooledge's explainer on this `while` loop pattern and ProcessSubstitution). Problem: I'm seeing that vim only opens correctly with a for loop but not with a while loop. Can someone help point out what's happening here with the while loop and how to fix it properly?

Here's exactly what I'm doing, in a simple/reproducible case:

# first line for r/bash folks who might not know about printf overloading

$ while read f; do echo "got '$f'" ;done < <(printf '%s\n' foo bar baz)

got 'foo'

got 'bar'

got 'baz'

# Okay now the case I'm asking for help with:

$ while read f; do vim "$f" ;done < <(printf '%s\n' foo bar baz)

expected: when I run the above, I'm expecting it's equivalent to doing:

# opens vim for each file, waits for vim to exit, then opens vim for the next...

for f in foo bar baz; do vim "$f"; done

actual/problem: strangely I find myself on a blank vim buffer ([No Name]) with two lines, bar followed by baz. If I inspect my buffers (to see if I got any reference to the foo file), I do see it in the second buffer:

:ls

1 %a + "No Name" line 1

2 "foo" line 0

I'm expecting vim to just have opened with a single buffer, editing the foo file. Anyone know why this isn't happening?

### Debugging

So I'm trying to reason about how it is that vim is clearly getting ... rr... more information. Here's what I tried:

note 1: print the argument myself, to sanity check what's being passed to my command; see the dummy argprinter func:

$ function argprinter() { printf 'arg: "%s"\n' "$@"; }

$ while read f; do argprinter "$f" ;done < <(printf '%s\n' foo bar baz)

arg: "foo"

arg: "bar"

arg: "baz"

note 2: So the above seems right, but I noticed if I do :ar in vim I only see [foo] as expected. So it's just the :ls buffer listing that's a mystery to me.

https://redd.it/10egdhk
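
A likely explanation, sketched rather than confirmed against this exact setup: every command inside the loop body inherits the loop's stdin, which here is the process substitution, so the editor can read the remaining lines (bar, baz) before `read` gets to them. That would also explain why the loop stops after foo. The sketch below uses a made-up stand-in, `fake_editor`, that simply drains its stdin, and `/dev/null` plays the role of the terminal so the demo never blocks. How a given vim build treats non-terminal stdin varies, so that part is an assumption.

```bash
#!/usr/bin/env bash
# Stand-in for vim (hypothetical helper): like an editor started with a
# non-terminal stdin, it reads whatever is waiting on standard input.
fake_editor() { cat >/dev/null; }

# Problem: the loop body shares stdin with `read`, so the first command run
# inside the loop swallows the remaining lines (bar, baz).
shared=()
while IFS= read -r f; do
    shared+=("$f")
    fake_editor "$f"
done < <(printf '%s\n' foo bar baz)

# Fix: feed the list on file descriptor 3 and read it with `read -u 3`,
# leaving stdin alone (here /dev/null stands in for the terminal).
separate=()
while IFS= read -r -u 3 f; do
    separate+=("$f")
    fake_editor "$f"
done 3< <(printf '%s\n' foo bar baz) </dev/null

printf 'shared stdin: %s\n' "${shared[*]}"
printf 'separate fd:  %s\n' "${separate[*]}"
```

With the real editor, the same idea would be `while IFS= read -r -u 3 f; do vim "$f"; done 3< <(printf '%s\n' foo bar baz)`. Keeping the original loop and running `vim "$f" </dev/tty` should work as well, since it gives vim the terminal as stdin explicitly.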

@r_bash

What tool is it?

I changed my computer and reinstalled everything from scratch a month ago.

But I am missing a command/tool/setting or whatever that I had on the old computer, related to browsing/scrolling up and down the output in the terminal. There was a key binding that made the cursor jump to the beginning of the previous command in the terminal. I am not talking about a simple Page Up; the thing knows exactly how many pages to scroll up in the terminal output to reach the beginning of the previous command.

Which one is this?

I really don't remember if it is something specific to bash (I only use bash) or something related to KDE/Konsole...

https://redd.it/10egz0w

@r_bash

Name of that utility which generates DAGs from text to SVG?

I've forgotten the name of the utility which generates DAGs from text, can you remember it?

You can give it a mydag.txt like:

a -> b

b -> c

In fact I'm very confident that was the basic syntax.

And then you can call:

cat mydag.txt | program -t svg -o out.svg

And a DAG will be drawn in SVG format.

I'm pretty sure this image used the same utility because it matches the default style exactly:

https://en.wikipedia.org/wiki/Directed_acyclic_graph#/media/File:Tred-G.svg

(P.S. it's not gnuplot, as far as I can remember.)
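
The description sounds a lot like Graphviz's `dot`, though that is a guess from the syntax and the linked image rather than something the post confirms. `dot` expects the edges wrapped in a `digraph { ... }` block, so a bare edge list like the one above would need a small wrapper first. A minimal sketch, where the function name `edges_to_dot` is made up for this example:

```bash
#!/usr/bin/env bash
# Hypothetical helper: wrap plain "a -> b" edge lines (read from stdin) in the
# digraph block that Graphviz's DOT language expects.
edges_to_dot() {
    printf 'digraph G {\n'
    while IFS= read -r edge; do
        # Skip empty lines; emit each edge as one DOT statement.
        if [ -n "$edge" ]; then
            printf '    %s;\n' "$edge"
        fi
    done
    printf '}\n'
}

dot_source=$(printf '%s\n' 'a -> b' 'b -> c' | edges_to_dot)
printf '%s\n' "$dot_source"
```

If Graphviz is installed, `edges_to_dot < mydag.txt | dot -Tsvg -o out.svg` should then write the drawing to out.svg.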

https://redd.it/10eraf3

@r_bash

trying to delete some apks and directories that malware placed on my phone keep getting access denied

https://redd.it/10evlv8

@r_bash

Posted in r/bash by u/TryingToLearnBash • 1 point and 0 comments